Design automation for real-time/synchronous interfaces in Dynamics 365 for Finance has its challenges but it’s quite often less complex than do the same for batch/asynchronous interfaces, for a couple reasons. On batch interfaces we need to manipulate files across servers, be aware of network traffic, quotas, operation timeouts, storage limits and costs, and so on.

While consuming OData endpoints are relatively simple – get a token, call the service, receive the response, with Data Management Framework APIs isn’t that straight forward and requires additional steps to set your project, upload/enqueue the file, execute, wait, and if necessary request execution status in loop until it’s finished, so we can send a response/acknowledgement to the source system.

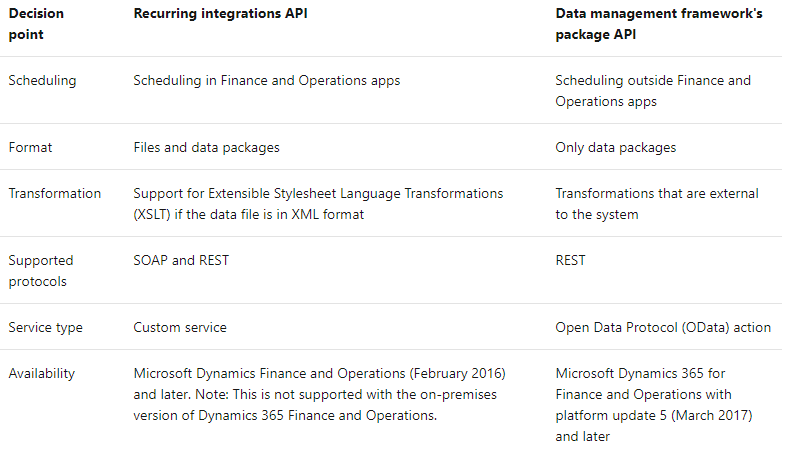

There are two APIs available to automate the batch integrations using Data Management Framework, and these are they key differences:

Choosing the best API, patterns and tools depends of each scenario and can be confusing with so many options available.

In this post I’ll describe how to use Data Package API, Business Events and Azure Functions to build an event-based package import process automation.

Why Azure Functions over Logic Apps?

Both are great options and the choice depends of how much flexibility and control you want, versus how much code you’re willing to write. But a key difference made me choose Azure Functions for this particular task: its event-based triggers, while Logic Apps works with polling-based triggers.

For simple integration scenarios it does not make a big difference, however, as the complexity increases and we add in the picture multiple legacy systems generating data in a non-linear recurrence to be loaded near real-time, every execution counts as it can be quite costly and inefficient design you middleware to poll multiple sources in a pre-defined scheduling.

Another point to consider – as the communication between the middleware and Data Package API requires many calls in sequence, having control over each step and the possibility to apply different patterns – like throttling, circuit breaker, retry patterns, and others – is essential.

Solution overview

For the sake of simplicity, we’ll skip the data extraction and transformation steps and I’ll assume you have a DMF import project in place and a sample data package ready. Please note the import project category must be set to “Integration” – the reason is explained later in this post.

The package must be uploaded to a persistent storage to trigger the Function and start the import process. Azure Storage Blob works quite well for that purpose as it’s fully integrated with other Azure tools, offering redundancy (LRS, ZRS, GRS/RA-GRS), low latency and access via REST APIs.

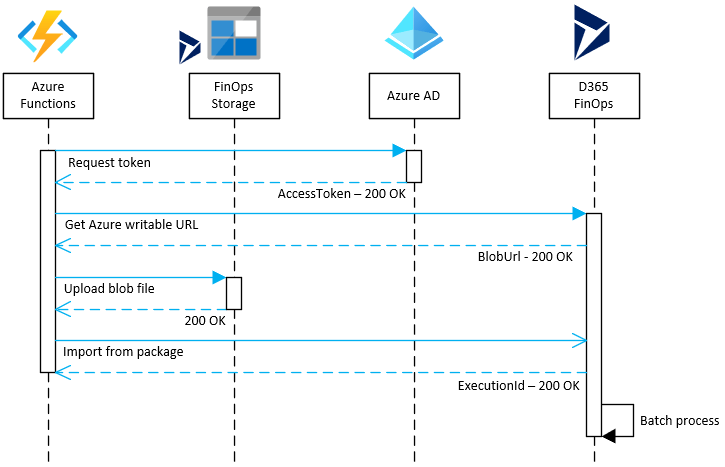

Once the zip package is uploaded to Storage Blob (1), the Function app is triggered and a stream connection is opened to download the blob file (2), initiating the import process flow to Data Package API (3).

A sample function app containing the code to perform the operations mentioned above can be downloaded from the GitHub repository below (please note it’s a basic sample for learning purposes only):

https://github.com/ffilardi/fo-datapackageimport

The function is triggered when a new file (.zip) is uploaded to a storage account in a container named “package-import“. Update the local.settings.json file with the required parameters, and make sure these parameters are deployed as application settings variables when publishing to Azure.

Once the function is triggered, four calls are required to start the import process to FinOps. Here’s the sequence flow:

Additional calls may be made to request the job execution status update. That means polling-based recurring requests until the execution finishes. To avoid that we’ll adopt an event-based approach using the Business Events framework and a HTTP triggered function, inserting the acknowledgement data in a log table for reporting and management.

Acknowledgement events

The idea is similar to the export scenarios using DMF and Business Events, as explained in a previous blog post here. But in this case we’ll build events that are triggered when import jobs are finished.

Azure Table storage provides a NoSQL key-value store, handling massive semi-structured datasets. It’s a good fit for logging solution, providing a schemaless design, possibility to perform OData-based queries, easily consumed by many BI and reporting tools.

With a variety of out-of-the-box data connectors, for PowerBI is one of best choice to query, filter, visualize and interact with the data by connecting directly to Azure Storage Table to create reports and real-time dashboards.

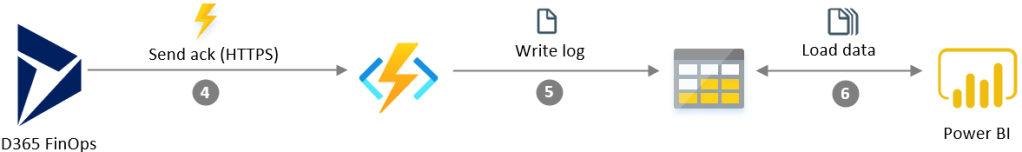

Back to the process, once the import job is complete a Business Event notification is sent to Azure Function via HTTPS endpoint (4), containing execution status and other metadata. This information is then saved (5) into the Azure Storage Table. The data is then ready to be consumed by PowerBI (6).

In a nutshell, the custom business event listen for insert actions on table DMFDefinitionGroupExecutionHistory – where new records are created when an import project starts its execution and when it finishes. It will also filter the projects by category (Integration) and operation type (Import). The reason for that is to avoid catch events for manually imported files via user interfaces (projects using other categories).

The business event extension requires the implementation of 3 classes:

- Event handler class: define the trigger and filters to get the event/data.

- Event contract class: define the content for the notification message payload.

- Event class: the event itself, called by the handler class to initialize/populate the contract and apply any business logic (if/when required).

A sample implementation is available here:

https://github.com/ffilardi/D365FO-DMFImportSatusBusinessEvent

Just copy the files to your model, run a code build and once it’s done, rebuild the business event catalog in the Business events catalog form under System administration.

The new event must be activated using a HTTP endpoint. To learn how to do that just follow the instructions from my previous post here.

And finally, to receive and process the event, a HTTP triggered function endpoint receives the business event payload, extracts the relevant data and transforms into a document to be inserted into a Storage Table. A sample implementation to be used as a guideline is available as part of the same repository/project mentioned above – check here.

Reporting and management

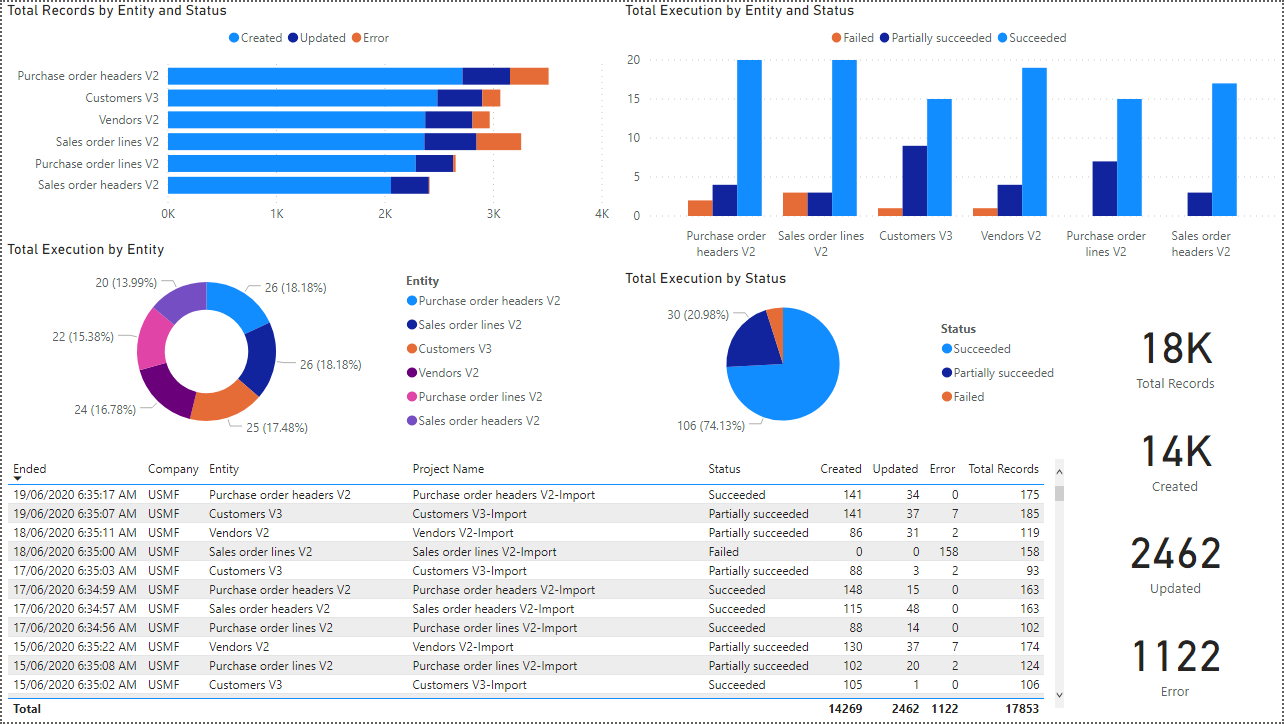

The following fields are extracted from Business Event payload and written to the log table:

- Entity (PartitionKey)

- Event Id (RowKey)

- Business Event Id

- Project Name

- Project Description

- Execution Id

- Company (Legal Entity)

- Total Records

- Total Created Records

- Total Updated Records

- Total Error Records

- Operation Type

- Project Category

- Started Date & Time

- End Date & Time

- Status

Based on that information we can build dashboards and reports in PowerBI to monitor the import results, errors, record counts and so on.

PowerBI dashboard – Click to enlarge

Wrapping up

There are many tools, patterns and ways to build an automation solution for batch integrations with FinOps. There’s no right or wrong approach, we must find the most appropriate for each case.

In a cloud-first world, leverage the scalability, availability, security and out-of-box features provided by SaaS and PaaS solutions, and adopting a serverless and event-based architecture in a pay-per-use model are key components to consider for any integration scenario, allowing us to focus on our business solutions instead of creating the software stack needed to implement it.

Great stuff Fabio!

A small spelling mistake:

In this post I’ll describe how to use Data Package API, Business Events and Azure Functions to build an event-based package import process automation.

Could you elaborate a bit on your comment:

But a key difference made me choose Azure Functions for this particular task: its event-based triggers, while Logic Apps works with polling-based triggers.

From my understanding, you should be able to get a real time event based implementation from Blob inserts via Event Grid, so that you don’t have to implement a polling pattern in your Logic App. I acknowledge that there are other factors in your design that gains from being in an Azure Function vs. Logic App, but I feel that the comment about Logic App being a polling pattern is a bit simplistic.

From the Storage Account you can actually configure a new Logic App via a wizard styled guide, where you configure the Storage Account connection and the Event Grid event, and then you will have an empty Logic App to start working from.

LikeLiked by 1 person

Thanks Mötz, typo fixed. About the Logic Apps, yes, you’re right. There are many ways to design it and using Event Grid is one of them. But the comparison was between LA and Functions only, without add any additional tool in the picture. As the focus of the article is automate the DMF import process, I’ve tried to simplify the steps before (and after) as much as possible. I had Event Grid, Service Bus, LA and Cosmos DB as part of the initial end-to-end solution, but the article was getting way too long and boring.

Maybe on next posts I can try to explore other specific scenarios… And, I must admit, my opinion is biased in favour of Functions as I love coding more than drag-n-drop boxes. 🙂

LikeLiked by 1 person

I know all to well the struggle to keep things simple and easy, while exploring the options. I just wanted you make sure that your readers didn’t get a feeling that Logic App is a lesser tool, only supporting a polling pattern. If they read the post, we can hope they read the comments and that way learn what other options they have.

You should challenge yourself to blog about the other tools as well, to help your readers have a broader perspective and help them pick the right components. Even if you’re biased, and the post might conclude that the a Logic App isn’t the best fit, it will still prove valuable for the reader 😉

LikeLike

That’s the point we probably disagree, Mötz. It’s not a wrong statement to say that Logic Apps by itself only supports a polling pattern. Unless you introduce Event Grid, adding extra costs and management effort that your client might not be willing to pay for.

It’s also not a wrong statement to say it’s a lesser tool in several aspects comparing to Functions. It does offer a few advantages though, and it’s very common to have both in the same solution playing different roles. In the comparison I’ve mentioned the reasons why I chose Functions over LA for this case, not saying LA isn’t not valuable.

There’s no perfect tool or perfect pattern, and I believe the post conclusion addresses exactly that point. I wish I had more time to post more cases, too many challenges in my day already… Hope the readers check the comments. Good stuff, mate. 🙂

LikeLike

Hi Fabio, great article..why would you use this method over ” export scenarios using DMF and Business Events” you posted in another blog post?

LikeLike

Thanks Ray. Not sure which other post you’re referring to, but this one is an import scenario.

LikeLike

Hi Fabio, great post. Why would you use this method over “DMF and Business Events” blog post you referenced earlier?

LikeLike